AI and automation are making development quick and affordable. Now, the future belongs to teams that learn as fast as they build.

Building software takes patience and persistence. Projects run long, budgets stretch thin, and crossing the finish line often feels like survival. If we launch something that works, we call it a win.

That rhythm has defined the industry for decades. But now, the tempo is changing. Kevin Kelly, the founding executive editor of Wired Magazine, once said, “Great technological innovations happen when something that used to be expensive becomes cheap enough to waste.”

AI-assisted coding and automation are eliminating the bottlenecks of software development. What once took months or years can now be delivered in days or weeks. Building is no longer the hard part. It’s faster, cheaper, and more accessible than ever.

Now, as more organizations can build at scale, custom software becomes easier to replicate, and its return as a competitive advantage becomes less predictable. As product differentiation becomes harder to maintain, a new source of value emerges: applied learning, or how effectively teams can build, test, adapt, and prove what works.

This new ROI is not predicted; it is earned. It depends on the ability to:

- Build faster to test ideas in the real world.

- Learn faster from data, feedback, and outcomes.

- Adapt faster to turn proven insights into scalable solutions.

The organizations that succeed will learn faster from what they build and build faster from what they learn.

From Features to Outcomes, Speculation to Evidence

Agile transformed how teams build software. It replaced long project plans with rapid sprints, continuous delivery, and an obsession with velocity. For years, we measured progress by how many features we shipped and how fast we shipped them.

But shipping features doesn’t equal creating value. A feature only matters if it changes behavior or improves an outcome, and many don’t. As building gets easier, the hard part shifts to understanding which ideas truly create impact and why.

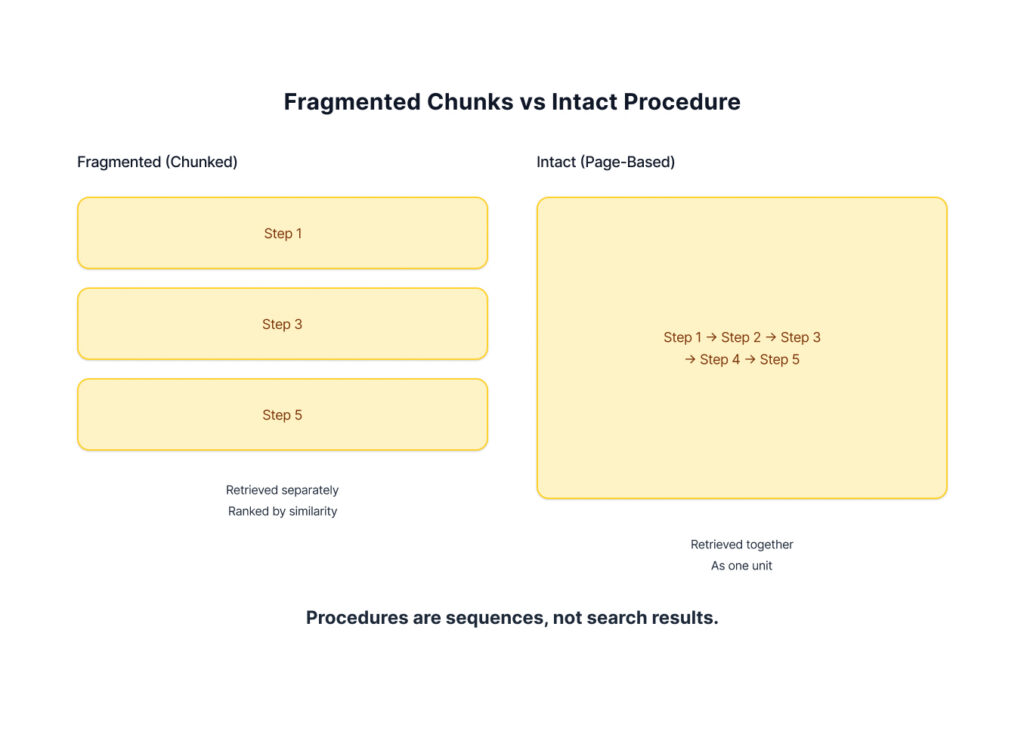

AI-assisted and automated development now make that learning practical. Teams can generate several variations of an idea, test them quickly, and keep only what works best. The work of software development starts to look more like controlled experimentation.

This changes how we measure success. The old ROI models relied on speculative forecasts and business cases built on assumptions about value, timelines, and adoption. We planned, built, and launched, but when the product finally reached users, both the market and the problem had already evolved.

Now, ROI becomes something we earn through proof. We begin with a measurable hypothesis and build just enough to test it:

If onboarding time falls by 30 percent, retention will rise by 10 percent, creating two million dollars in annual value.

Each iteration provides evidence. Every proof point increases confidence and directs the next investment. In this way, value creation and validation merge, and the more effectively we learn, the faster our return compounds.
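To make the idea of a measurable hypothesis concrete, here is a minimal Python sketch of how a team might check one iteration's evidence against the example hypothesis. It assumes that "retention will rise by 10 percent" means a relative lift over the baseline. The class, the function names, and the pilot numbers are hypothetical illustrations, not a prescribed tool.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    """A measurable hypothesis: a leading change expected to drive a lagging outcome."""
    onboarding_reduction_target: float  # 0.30 means "onboarding time falls by 30 percent"
    retention_lift_target: float        # 0.10 means "retention rises by 10 percent" (assumed relative lift)
    projected_annual_value: float       # e.g. 2_000_000 dollars, claimed only if both targets hold


def relative_change(baseline: float, observed: float) -> float:
    """Fractional change from baseline; negative means the metric went down."""
    return (observed - baseline) / baseline


def evaluate(h: Hypothesis,
             baseline_onboarding_min: float, observed_onboarding_min: float,
             baseline_retention: float, observed_retention: float) -> dict:
    """Compare one iteration's measurements with the hypothesis targets (hypothetical helper)."""
    onboarding_reduction = -relative_change(baseline_onboarding_min, observed_onboarding_min)
    retention_lift = relative_change(baseline_retention, observed_retention)
    leading_met = onboarding_reduction >= h.onboarding_reduction_target
    lagging_met = retention_lift >= h.retention_lift_target
    return {
        "onboarding_reduction": round(onboarding_reduction, 3),
        "retention_lift": round(retention_lift, 3),
        "leading_met": leading_met,
        "lagging_met": lagging_met,
        # Count the projected value only once both links in the chain are supported.
        "value_supported": h.projected_annual_value if (leading_met and lagging_met) else 0.0,
    }


if __name__ == "__main__":
    h = Hypothesis(0.30, 0.10, 2_000_000)
    # Hypothetical pilot numbers: onboarding 50 -> 34 minutes, retention 60% -> 67%.
    print(evaluate(h, 50, 34, 0.60, 0.67))
```

The design choice worth noting is that the projected value is counted only when both the leading signal (onboarding time) and the lagging outcome (retention) hold, which mirrors the idea that value creation and validation move together.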

ROI That Compounds

ROI used to appear only after launch, when the project was declared “done.” It was calculated as an academic validation of past assumptions and decisions. The investment itself remained a sunk cost, viewed as money spent months ago.

In an outcome-driven model, value begins earlier and grows with every iteration. Each experiment creates two returns: the immediate impact of what works and the insight gained from what doesn’t. Both make the next round more effective.

Say you launch a small pilot with ten users. Within weeks, they’re saving time, finding shortcuts, and surfacing friction you couldn’t predict on paper. That feedback shapes the next version and builds the confidence to expand to a hundred users. Now you can measure quantitative impact, like faster response times, fewer manual steps, and higher satisfaction. The payoff scales rapidly as the value curve steepens with each round of improvement.

Moreover, you measure return continuously, using each cycle’s results as evidence to justify the next. In this way, return becomes the trigger for further investment, and the faster the team learns, the faster the return accelerates.

Each step also leaves behind a growing library of reusable assets: validated designs, cleaner data, modular components, and refined decision logic. Together, these assets make the organization smarter and more efficient with each cycle.

When learning and value grow together, ROI becomes a flywheel. Each iteration delivers a product that’s smarter, a team that’s sharper, and an organization more confident in where to invest next. To harness that momentum, we need reliable ways to measure progress and prove that value is growing with every step.

Measuring Progress in an Outcome-Driven Model

When ROI shifts from prediction to evidence, the way we measure progress has to change. Traditional business cases rely on financial projections meant to prove that an investment would pay off. In an outcome-driven model, those forecasts give way to leading indicators collected in real time.

Instead of measuring progress by deliverables and deadlines, we use signals that show we’re moving in the right direction. Each iteration increases confidence that we are solving the right problem, delivering the right outcome, and generating measurable value.

That evidence evolves naturally with the product’s maturity. Early on, we look for behavioral signals, or proof that users see the problem and are willing to change. As traction builds, we measure whether those new behaviors produce the desired outcomes. Once adoption scales, we track how effectively the system converts those outcomes into sustained business value.

You can think of it as a chain of evidence that progresses from leading to lagging indicators:

Behavioral Change → Outcome Effect → Monetary Impact

The challenge, then, is to create a methodology that exposes these signals quickly and enables teams to move through this progression with confidence, learning as they go. This process follows the spirit of Agile, but its emphasis changes as the product evolves through four stages of maturity:

Explore & Prototype → Pilot & Validate → Scale & Optimize → Operate & Monitor

At each stage, teams iteratively build, test, and learn, advancing only when success is proven. What gets built, how it’s measured, and what “success” means evolve as the product matures. Early stages emphasize exploration and learning; later stages focus on optimizing outcomes and capturing value. Each transition strengthens both the evidence that the product works and the confidence in where to invest next.

1. Explore & Prototype:

In the earliest stage, the goal is to prove potential. Teams explore the problem space, test assumptions, and build quick prototypes to expose what’s worth solving. The success measures are behavioral: evidence of user willingness and intent. Do users engage with early concepts, sign up for pilots, or express frustration with the current process? These signals de-risk demand and validate that the problem matters.

The product moves to the next stage only with a clear, quantified problem statement supported by credible behavioral evidence. When users demonstrate they’re ready for change, the concept is ready for validation.

2. Pilot & Validate:

Here’s where a prototype turns into a pilot to test whether the proposed solution actually works. Real users perform real tasks in limited settings. The indicators are outcome-based. Can people complete tasks faster, make fewer errors, or reach better results? Each of these metrics ties directly to the outcome the product is meant to achieve.

To advance from this stage, the pilot must show measurable progress toward the outcome. When that evidence appears, it’s time to expand.

3. Scale & Optimize:

As adoption grows, the focus shifts from proving the concept to demonstrating outcomes and refining performance. Every new user interaction generates evidence that helps teams understand how the product creates impact and where it can improve.

Learning opportunities emerge from volume. Broader usage reveals edge cases, hidden friction points, and variations that help teams refine the experience, calibrate models, automate repetitive tasks, and make the product more effective at delivering its outcomes.

At this stage, value indicators connect usage to business KPIs like faster response times, higher throughput, improved satisfaction, and lower support costs. This is where value capture compounds. As more users adopt the product, the value they generate accumulates, proving that the system delivers significant business impact.

The product reaches the next level of maturity when it shows sustained, reliable impact on outcome measures across widespread usage.

4. Operate & Monitor:

In the final stage, the emphasis shifts from optimization to observation. The system is stable, but the environment and user needs keep evolving and can erode its effectiveness over time. The goal is twofold: ensure that value continues to be realized and detect the earliest signals of change.

The indicators now focus on sustained ROI and performance integrity. Teams track metrics that show ongoing return (cost savings, revenue contribution, efficiency gains) while monitoring usage patterns, engagement levels, and model accuracy.

When anomalies appear (drift in outcomes, declining engagement, or new behaviors), they become the warning signs of changing user needs. Each anomaly hints at a new opportunity and loops the team back into exploration. This begins the next cycle of innovation and validation.
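One simple way to surface the declining-engagement signal described above is to compare recent usage with a historical baseline. The sketch below assumes weekly engagement counts and uses a hypothetical `engagement_drift` helper with an arbitrary four-week window and 15 percent tolerance. Real monitoring would likely use more robust statistics, but the shape of the check is the same.

```python
from statistics import mean


def engagement_drift(history: list[float], window: int = 4, tolerance: float = 0.15) -> bool:
    """Hypothetical drift check: True when the latest `window` readings average
    more than `tolerance` (a fraction) below the average of all earlier readings."""
    if window < 1 or len(history) <= window:
        return False  # not enough data to form both a baseline and a recent window
    baseline = mean(history[:-window])
    recent = mean(history[-window:])
    return recent < baseline * (1 - tolerance)


if __name__ == "__main__":
    # Hypothetical weekly active users for one feature.
    weekly_active = [100, 102, 98, 101, 99, 80, 78, 82, 79]
    if engagement_drift(weekly_active):
        print("Engagement drift detected: loop back to Explore & Prototype")
```

A flag like this does not explain why engagement fell; it only tells the team where to start exploring again.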

From Lifecycle to Flywheel: How ROI Becomes Continuous

Across these stages, ROI becomes a continuous cycle of evidence that matures alongside the product itself. Each phase builds on the one before it.

- Explore & Prototype creates early confidence that the problem is worth solving.

- Pilot & Validate proves that the solution works.

- Scale & Optimize demonstrates measurable outcomes while capturing real business value.

- Operate & Monitor sustains that value capture and reveals where the next cycle begins.
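The stage-gated loop summarized above can be sketched as a small state machine. This is an illustration under simple assumptions, not a prescribed framework: each stage is an enum value, the gate evidence strings paraphrase this article's exit criteria, and `next_stage` is a hypothetical helper that advances only when a gate is met and wraps from Operate & Monitor back to exploration.

```python
from enum import Enum


class Stage(Enum):
    EXPLORE_PROTOTYPE = 1
    PILOT_VALIDATE = 2
    SCALE_OPTIMIZE = 3
    OPERATE_MONITOR = 4


# Evidence each stage must produce before its gate opens (paraphrased, illustrative only).
GATE_EVIDENCE = {
    Stage.EXPLORE_PROTOTYPE: "quantified problem statement backed by behavioral evidence",
    Stage.PILOT_VALIDATE: "measurable progress toward the target outcome",
    Stage.SCALE_OPTIMIZE: "sustained, reliable outcome impact across widespread usage",
    Stage.OPERATE_MONITOR: "an anomaly signaling changed user needs",
}


def next_stage(current: Stage, gate_met: bool) -> Stage:
    """Advance only on proof; otherwise keep iterating (build, test, learn) in place."""
    if not gate_met:
        return current
    if current is Stage.OPERATE_MONITOR:
        # An anomaly in operation loops the team back into exploration.
        return Stage.EXPLORE_PROTOTYPE
    return Stage(current.value + 1)


if __name__ == "__main__":
    stage = Stage.EXPLORE_PROTOTYPE
    # Hypothetical gate results, one per review cycle.
    for gate_met in [False, True, True, False, True, True]:
        stage = next_stage(stage, gate_met)
        print(stage.name, "| next gate needs:", GATE_EVIDENCE[stage])
```

Modeling the loop this way makes the funding rule explicit: a failed gate does not end the effort, it keeps the team in the same stage until the evidence arrives.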

Together, these stages form a closed feedback loop—or flywheel—where evidence guides investment. Every dollar spent produces both impact and insight, and those insights direct the next wave of value creation. The ROI conversation shifts from “Do you believe it will pay off?” to “What proof have we gathered, and what will we test next?”

From ROI to Investment Upon Return

AI and automation have made building easier than ever before. The effort that once defined software development is no longer the bottleneck. What matters now is how quickly we can learn, adapt, and prove that what we build truly works.

In this new environment, ROI becomes a feedback mechanism. Returns are created early, validated often, and reinvested continuously. Each cycle of discovery, testing, and improvement compounds both value and understanding, creating a lasting advantage.

This requires a mindset shift as much as a process shift: from funding projects based on speculative confidence in a solution to funding them based on their ability to generate proof. When return on investment becomes investment upon return, the economics of software change completely. Value and insight grow together. Risk declines with every iteration.

When building becomes easy, learning fast creates the competitive advantage.

The pace of AI change can feel relentless with tools, processes, and practices evolving almost weekly. We help organizations navigate this landscape with clarity, balancing experimentation with governance, and turning AI’s potential into practical, measurable outcomes. If you’re looking to explore how AI can work inside your organization—not just in theory, but in practice—we’d love to be a partner in that journey. Request an AI briefing.

The New Equations

- Predictive ROI → Evidential ROI

- Features as Value → Outcomes as Value

- Delivery Success → Learning Success

- Fixed Scope → Scaled Confidence

- Return on Investment → Return on Insight

Key Takeaways

- AI-assisted development has made building software fast, affordable, and repeatable, shifting the value equation toward validation and learning.

- Evidential ROI replaces predictive ROI, using proof over projection to guide investment and strategy.

- Iterative learning turns every sprint into calibration, where teams advance by testing, validating, and refining in real time.

- Return on Learning measures how fast teams adapt and evolve, while Return on Ecosystem tracks how insights spread across an organization.

- The new competitive advantage lies in learning speed, not build speed. Those who learn faster deliver greater long-term value.

FAQs

What does “software cheap enough to waste” mean?

It describes a new phase in software development where AI and automation have made building fast, low-cost, and low-risk, allowing teams to experiment more freely and learn faster.

Why does cheaper software matter for innovation?

When building is inexpensive, experimentation becomes affordable. Teams can test more ideas, learn from data, and refine products that actually work for people.

How does this change ROI in software development?

Traditional ROI measured delivery and cost efficiency. Evidential ROI measures learning, outcomes, and validated impact: value that grows with each iteration.

What are Return on Learning and Return on Ecosystem?

Return on Learning measures how quickly teams adapt and improve through cycles of experimentation. Return on Ecosystem measures how insights spread and create shared success across teams.

What’s the main takeaway for leaders?

AI and automation have changed the rules. The winners will be those who learn the fastest, not those who build the most.
